data center solutions

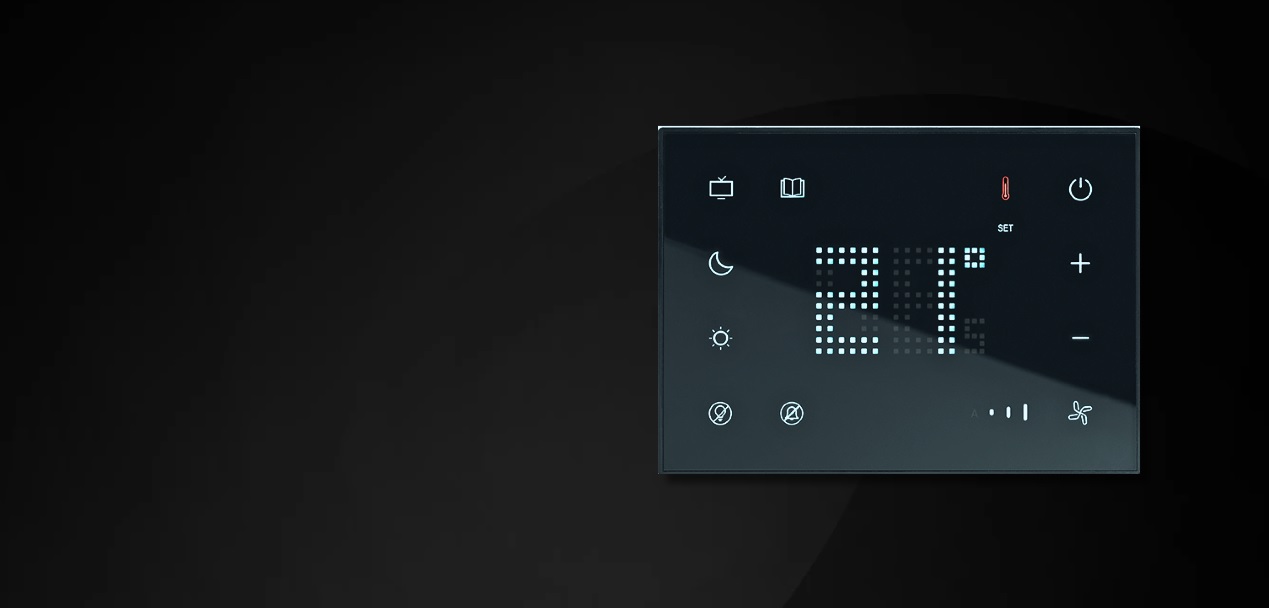

A few years ago, building for the cloud was relatively straightforward. You picked a provider, scaled as needed, and optimized along the way. That simplicity is gone. In 2026, AI has changed the rules, not just for software but for the infrastructure behind it.

What used to be a single decision is now a series of trade-offs: training versus inference, cost versus performance, speed versus control. And increasingly, one environment is not enough. The result is a fragmented ecosystem of AI infrastructure models, each solving a different part of the problem. That shift is quietly redefining how data centers need to be designed.

AI Workloads Are No Longer Predictable

One of the biggest changes is that AI workloads behave very differently from traditional IT. Some environments demand extreme compute density, with clusters running at levels that would have been unthinkable a few years ago. Others prioritize low latency, serving responses in milliseconds across distributed locations.

This creates a fundamental challenge: infrastructure can no longer be designed for a single steady state.

Six Ways AI Infrastructure Is Taking Shape

Instead of one dominant model, six distinct approaches have emerged, not as competitors but as pieces of a larger puzzle.

Hyperscalers: Still the Default Starting Point

For many organizations, the journey still begins here.

These platforms bring familiarity, compliance, and deep integration with existing systems. But as AI demands grow, they can feel slower to adapt, especially when scaling large GPU environments.

From an infrastructure perspective, this means designing for stability, but also for extension beyond a single cloud.

Neoclouds: Built Around Performance

A newer category has emerged with a clear focus: raw AI performance. These environments are designed to deliver high-density compute quickly, making them ideal for training workloads.

But high performance comes with infrastructure consequences. Power demand rises sharply. Density increases. Expansion needs to happen without downtime. This is where flexible distribution systems, such as modular busbar architectures, start to make a real difference. They allow capacity to grow without rebuilding the entire system.

Developer Clouds: Lower Barriers, Faster Start

Not every team needs massive scale. Some need speed. Simplicity. The ability to start quickly without managing complexity.

These platforms support that, but they also highlight a different infrastructure need: scalability without overcommitment. Instead of building for peak from day one, infrastructure needs to grow in steps. Modular designs make that possible.
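To make "growing in steps" concrete, here is a minimal sketch that compares a stepped build-out against provisioning for peak from day one. The module size, the load forecast, and the function names are all hypothetical, chosen only for illustration; they do not describe any particular product or site.

```python
import math


def modules_needed(load_kw: float, module_kw: float) -> int:
    """Smallest number of equal-sized power modules that covers the load."""
    if load_kw <= 0:
        return 0
    return math.ceil(load_kw / module_kw)


def stepped_plan(forecast_kw: list[float], module_kw: float) -> list[int]:
    """Modules installed in each period, assuming capacity is never removed."""
    plan = []
    installed = 0
    for load in forecast_kw:
        # Add modules only when the forecast load exceeds what is installed.
        installed = max(installed, modules_needed(load, module_kw))
        plan.append(installed)
    return plan


# Hypothetical forecast (kW per period) and hypothetical module size (kW).
forecast = [120.0, 260.0, 240.0, 610.0]
plan = stepped_plan(forecast, module_kw=250.0)
peak_first = modules_needed(max(forecast), module_kw=250.0)

print(plan)        # [1, 2, 2, 3]
print(peak_first)  # 3
```

Under these assumed numbers, the stepped plan starts with one module and reaches three only in the final period, whereas building for peak would install all three up front.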

Inference Platforms: Where AI Becomes Real-Time

If training builds the model, inference is where value is delivered. And this is where things get interesting. Inference workloads are distributed, latency-sensitive, and often always-on. They cannot afford instability.

That shifts the focus from raw compute to reliability: power quality, uptime, and intelligent monitoring become critical, because even a short disruption can impact thousands of real-time interactions.

GPU Marketplaces: Flexibility with Trade-offs

At the other end of the spectrum are marketplaces offering access to GPUs at lower cost. They enable experimentation and flexibility, but often with less consistency.

Which makes one thing clear: even when compute is decentralized, infrastructure stability still determines performance.

Orchestration Layers: Making It All Work Together

As complexity increases, abstraction becomes necessary.

These layers allow workloads to move across environments, optimizing for cost, performance, or availability. But this introduces a new expectation: infrastructure must keep up with that movement. It cannot be rigid. It cannot assume fixed loads. It must respond.
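As a rough illustration of how such a layer might choose where a workload runs, the sketch below scores each environment on cost, performance, and availability and picks the highest score. The environment names, the normalized scores, and the weights are invented for the example; they are assumptions, not measurements of any real provider, and real orchestration tools use far richer signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    name: str
    cost: float          # normalized 0..1, lower is better (hypothetical)
    performance: float   # normalized 0..1, higher is better (hypothetical)
    availability: float  # normalized 0..1, higher is better (hypothetical)


def score(env: Environment, weights: dict[str, float]) -> float:
    """Weighted score: reward performance and availability, penalize cost."""
    return (
        weights["performance"] * env.performance
        + weights["availability"] * env.availability
        - weights["cost"] * env.cost
    )


def place(envs: list[Environment], weights: dict[str, float]) -> Environment:
    """Pick the environment with the highest weighted score."""
    return max(envs, key=lambda env: score(env, weights))


# Illustrative values only.
environments = [
    Environment("hyperscaler", cost=0.7, performance=0.6, availability=0.9),
    Environment("neocloud", cost=0.8, performance=0.95, availability=0.7),
    Environment("gpu_marketplace", cost=0.3, performance=0.6, availability=0.5),
]

training = {"cost": 0.2, "performance": 0.7, "availability": 0.1}
experiment = {"cost": 0.7, "performance": 0.2, "availability": 0.1}

print(place(environments, training).name)    # neocloud
print(place(environments, experiment).name)  # gpu_marketplace
```

The same workload lands in different places as the weights change, which is why the physical infrastructure behind each environment cannot assume a fixed load.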

The Real Shift Is Physical, Not Just Digital

Much of the conversation around AI infrastructure focuses on software and cloud. But the real pressure is being felt in the physical layer. Power systems are being pushed harder. Rack densities are increasing. Load patterns are becoming less predictable.

Infrastructure is no longer a background function. It is an active enabler. Legrand Data Center Solutions are designed with this reality in mind, supporting environments where requirements change faster than traditional systems were built to handle.

From scalable power distribution to intelligent monitoring, the focus is not just on supporting today’s load but adapting to what comes next. Because in AI environments, infrastructure that cannot evolve quickly becomes a constraint.

Designing for an Uncertain Future

If there is one clear takeaway, it is this: there is no single “right” infrastructure model anymore. It is easy to focus on GPUs, providers, or cost.

But over time, the real advantage comes from something less visible: how well the infrastructure supports change.

Can it scale without downtime?

Can it handle higher density without redesign?

Can it adapt as workloads evolve?

These questions are becoming just as important as performance benchmarks.

Looking Ahead

AI infrastructure will continue to evolve. Categories will blur. New models will emerge.

But one thing is clear. The data centers that succeed will not be the ones optimized for a single scenario. They will be the ones designed for flexibility.

At Legrand, this means building systems that do not just support infrastructure but help it adapt.

Because in a landscape defined by constant change, the ability to evolve is what keeps everything running.

We go further, so your data center can too.
